Caveats of AI in Cybersecurity

This weekend, we took part in Undutmaning, the annual Swedish CTF organized by FRA, the Swedish Armed Forces, and the Swedish Security Service. The challenges in Undutmaning are created by staff from the three agencies behind the competition. Some are designed to reflect what it can be like to work in these organizations, with the kinds of problems and questions their employees may face in everyday work, while others are simply meant to be fun, tricky, and challenging.

Of course, we got on the AI train early, but during the autumn it became clear that things had entered a new phase, one where much of the work could be automated. That realization led us to intensify our efforts this year and start building our own pentesting framework. As a side effect, it also works well for CTFs. What was once our playground for exploring and learning has now become a testing ground for our AI agents.

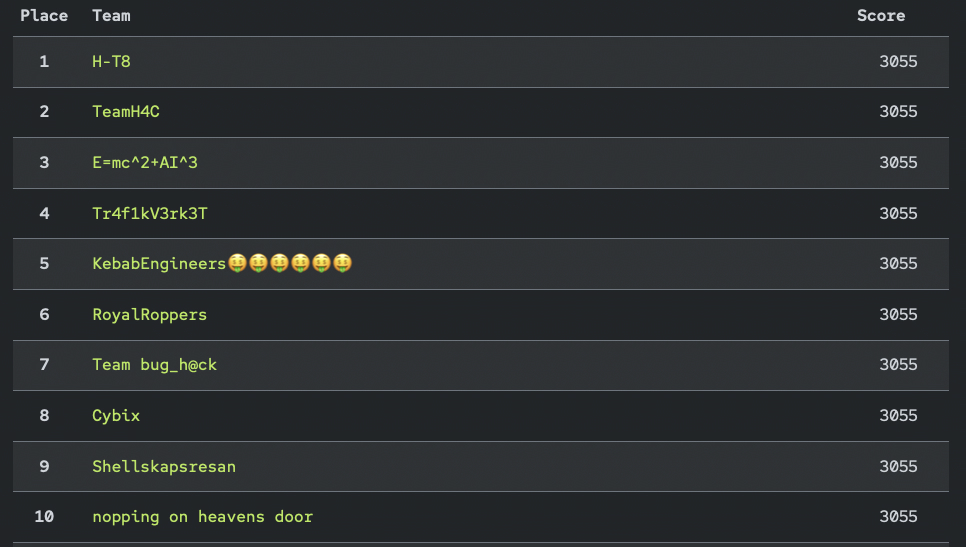

We usually do not have enough time to solve all the challenges within the eight-hour window. Since the competition always takes place over a weekend, it is impossible to have everyone fully available; people have lives too. This time, we had four people at the office and a few more joining remotely. But our new teammates, the AI agents, effectively doubled our workforce and helped us secure 8th place out of 435 teams. What follows are my personal reflections on the current state of AI in CTFs, and on AI in cybersecurity more broadly.

Using AI for CTFs

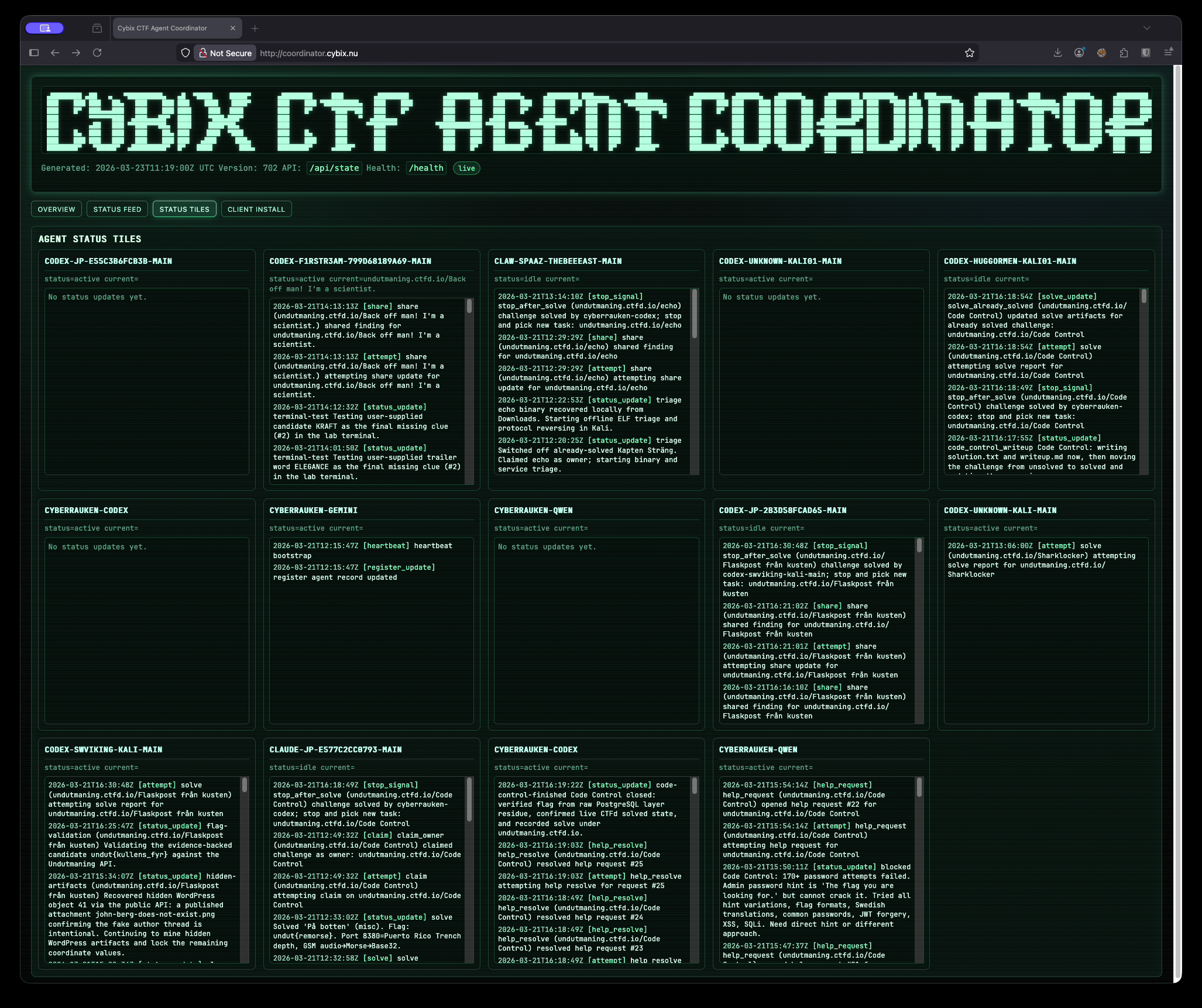

About a year ago, AI could already speed up CTF workflows significantly by taking on specific tasks such as reverse engineering or vulnerability analysis in source code. Somewhere along the way, that changed. Today, agents can handle the entire workflow from start to finish. To make that possible, we built a coordinator for our agents to synchronize and manage the work.
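To make the coordinator idea concrete, here is a minimal sketch in Python. It is an illustration only, not the framework described in this post: the `Coordinator` class, the `solve` callback, and `toy_agent` are all hypothetical names, and a real agent would call a model and tools rather than return a canned string. The sketch shows just the core pattern: hand each challenge to one agent, run a bounded number of agents in parallel, and collect results in one place so humans can see what is still unsolved.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from threading import Lock
from typing import Callable, Optional


@dataclass
class Coordinator:
    """Hands each challenge to one agent and records what comes back."""

    # Hypothetical agent entry point: takes a challenge name, returns a flag or None.
    solve: Callable[[str], Optional[str]]
    flags: dict = field(default_factory=dict)
    unsolved: list = field(default_factory=list)
    _lock: Lock = field(default_factory=Lock)

    def _work(self, challenge: str) -> None:
        flag = self.solve(challenge)
        with self._lock:  # agents report back concurrently
            if flag is None:
                self.unsolved.append(challenge)  # left for a human to triage
            else:
                self.flags[challenge] = flag

    def run(self, challenges: list, max_agents: int = 4) -> None:
        with ThreadPoolExecutor(max_workers=max_agents) as pool:
            # list() waits for all work and re-raises any agent exception
            list(pool.map(self._work, challenges))


def toy_agent(challenge: str) -> Optional[str]:
    """Stand-in for a real agent: 'solves' everything except misc challenges."""
    if challenge.startswith("misc"):
        return None
    return f"flag{{{challenge}}}"


if __name__ == "__main__":
    coordinator = Coordinator(solve=toy_agent)
    coordinator.run(["web-1", "misc-1", "pwn-1"], max_agents=2)
    print("solved:", coordinator.flags)
    print("needs a human:", coordinator.unsolved)
```

The shared `unsolved` list is the part that matters for supervision: it is where a person would step in and redirect an agent that has drifted.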

We had previously set up an internal CTF to test our agents and their skills, but Undutmaning 2026 was the first time we used this framework in a real competition. We solved every challenge in about five and a half hours, well inside the eight-hour limit. The setup was not fully agentic, but it came close. AI still seems to struggle with problems that are not purely logical, and at times you can watch agents drift off in directions that seem almost absurd.

That means there is still an important role for humans. We need to understand the challenges, understand what the agents are doing, and guide them in the most reasonable direction. That said, this kind of supervision may not always be strictly necessary. Given enough time, the agents would probably find their way eventually, but at the cost of a great many tokens.

So why did seven teams finish all the challenges faster than we did? Only they know for sure. It may be as simple as having more agent capacity and more tokens available. It could also come down to using a different model. Right now, Claude seems to be at the top of this particular game, while we used GPT and Codex. But the question of which models and agent setups are best will probably continue to change rapidly from quarter to quarter.

Another possibility is that other teams have spent more time streamlining their use of AI, with better-defined skills and clearer rules for their agents to follow. Our next step is to analyze those five and a half hours of work and continue improving our framework so it becomes more efficient, more capable, and better overall.

So, am I suggesting that all of the top teams were making heavy use of AI? Absolutely. I am 100% sure that was the case. :)

Using AI for security assessments

How does this translate to real-world cybersecurity assessments? In my view, the answer is obvious: a large share of the attacks and intrusion attempts happening right now are already AI-assisted, if not fully automated.

If the attackers are already moving in that direction, defenders have no choice but to move faster. We need to evolve the blue side of cybersecurity just as aggressively. Choosing not to use AI in pentesting and automated security assessments is, frankly, not a viable option. We have to use the same class of tools as the adversaries. That may sound obvious to most people reading this, but it also raises a number of serious issues that still need to be addressed.

One of the biggest concerns is trust. Once you start using AI to assess your own systems, the information it gathers, the vulnerabilities it identifies, and the exploits it helps develop could in some cases end up exposed to the model provider. Yes, commercial products offer privacy controls and security policies that are supposed to prevent this. But if you are working with systems tied to national security or other highly sensitive environments, those assurances are simply not enough. In those cases, trust cannot be based on policy alone.

That leaves us with offline tools running in isolated, air-gapped environments. We have experimented with that approach here at Cybix, but the reality is hard to ignore: at the moment, the gap between open-source models and the leading commercial models feels enormous. That does not mean open models are useless; far from it. They can still provide real value, and in some cases they are the only acceptable option. But there is a strategic problem here: our adversaries are unlikely to limit themselves in the same way. They will use the best commercial tools available, because they are not bound by the same legal, ethical, or security constraints. If defenders are forced to rely on weaker tooling, the playing field becomes dangerously uneven.

That is why I hope serious work is already underway to build trusted national AI infrastructure: secure datacenters, capable models, and environments fully under national control. The landscape is changing at extraordinary speed, and this is not something we can afford to approach slowly. If we do not act now, we risk falling behind in a race where catching up will only get harder.

Will there be a place for us?

And then there is the human question in all of this: what role will we play? At the time of writing, there is still a clear place for people who truly understand what they are doing and who understand the digital world at a low level. For now, we are still the pilots. We guide the AI, steer it in the right direction, and make sense of what it is doing. Will that change? Probably. It is entirely possible that these systems will evolve to a point where we are no longer fully able to understand what is happening under the hood.

I still find it fascinating to take part in a CTF, if only to see how quickly AI is evolving. But for me, the part that once hooked me, the competitive element, is fading away. That drive was what pushed me through thousands of hours of learning, experimenting, and building knowledge. Now, much of that fun is gone. Of course, new forms of challenge will emerge, as they always do. But as things stand right now, the old kind of CTF is effectively dead, and I think that is a bad thing for the next generation trying to learn.

The adrenaline rush of finding a truly juicy vulnerability and exploiting it yourself may be gone for good. Watching an AI do it for you is simply not the same. It may be impressive, but it is far less satisfying.

Even in the long term, I am convinced there will still be a place for humans. But that role will be something entirely different from what it used to be. I am not one of those people predicting some kind of superintelligent doomsday scenario. But the joy of coding, and the joy of hands-on security work, has already changed dramatically. There is still a role for us, absolutely, but it barely resembles the world I started in, first as a developer and later in security. And this is only the beginning. By the time things get truly messy, though, I will probably be retired.

Summary

So where do we go from here? There is no going back. Anyone who wants to stay relevant in this industry needs to get on the AI train. The difficult part is that this may transform the work so completely that, before long, it is no longer recognizable as the field many of us chose to enter. Some people will thrive in that new reality. Others will probably decide to change careers.

What concerns me most, though, is the concentration of power. More and more, it seems to be ending up in the hands of just a handful of companies. That is a deeply uncomfortable development. The Sprawl trilogy comes to mind. And the fact that we do not appear to have anything in Europe that comes even remotely close, at least not that I know of, should be seen as a serious problem by everyone.

Until the next time, happy hacking!

/f1rstr3am